The making of the App

Colour app

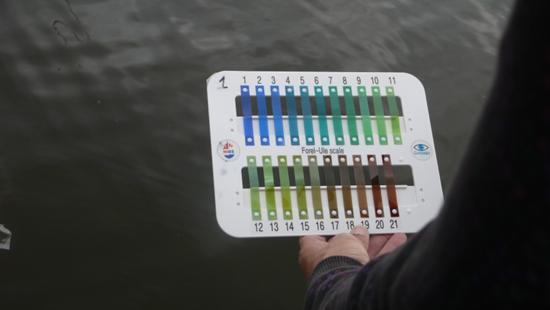

The goal of this prototype is to develop an app with which the end user can record the colour of the seawater they are observing. The measurement is made by comparing the image captured with the smartphone camera against the Forel-Ule (FU) scale. The FU scale is a method used in limnology and oceanography to determine approximately the colour of bodies of water: a colour palette, produced as a series of numerically designated vials (1-21) (see Figure 1), is compared with the colour of the water body.

Figure 1 Plastic FU scale
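To illustrate the comparison with the FU scale, the following Java sketch picks the FU number whose reference colour is closest to a measured colour, using squared Euclidean distance in RGB space. This is an assumption for illustration, not the app's actual method: the class name `FuMatcher` is hypothetical, the palette must be supplied by the caller (the sketch contains no official FU RGB values), and a real implementation might prefer a perceptual colour space over raw RGB.

```java
import java.util.List;

/**
 * Sketch: choose the FU band whose reference colour is nearest to a measured colour.
 * Hypothetical names; the palette (FU 1..n) is supplied by the caller.
 */
final class FuMatcher {

    /** An RGB colour with 8-bit channels (0-255). */
    static final class Rgb {
        final int r;
        final int g;
        final int b;

        Rgb(int r, int g, int b) {
            this.r = r;
            this.g = g;
            this.b = b;
        }
    }

    private FuMatcher() {}

    /** Squared Euclidean distance in RGB space; the square root is not needed to compare distances. */
    static int distanceSquared(Rgb x, Rgb y) {
        int dr = x.r - y.r;
        int dg = x.g - y.g;
        int db = x.b - y.b;
        return dr * dr + dg * dg + db * db;
    }

    /**
     * Returns the 1-based FU number of the palette entry closest to {@code measured}.
     * The palette is assumed to be ordered FU 1, FU 2, ..., FU n.
     */
    static int nearestFuIndex(Rgb measured, List<Rgb> palette) {
        if (palette.isEmpty()) {
            throw new IllegalArgumentException("palette must not be empty");
        }
        int bestIndex = 0;
        int bestDistance = Integer.MAX_VALUE;
        for (int i = 0; i < palette.size(); i++) {
            int d = distanceSquared(measured, palette.get(i));
            if (d < bestDistance) { // ties keep the lower FU number
                bestDistance = d;
                bestIndex = i;
            }
        }
        return bestIndex + 1; // the FU scale is numbered from 1
    }
}
```

Squared distance is used because it preserves the ordering of distances while avoiding a square root in the loop.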

When the camera is used as a tool for collecting scientific data, many technical and environmental parameters can distort the image data. One of the objectives of this prototype is to standardize the use of the camera and to control environmental parameters, so that pictures are of the highest possible quality for the project and provide as much data as current technology allows.

Specifications of the development

Considering the characteristics of the prototype and the conditions under which the measurements must be made, we propose the following initial settings:

- Photographic orientation

To take a correct picture, we must analyse the position of the camera relative to the sunlight, the focus, and the orientation of the camera relative to the plane of the water.

- White balance

White balance must be controlled to obtain a colour of the water as close to reality as possible. Since meteorological conditions can affect the colour captured by the camera, we included two of the white balance settings provided by Android: one for cloudy conditions (Daylight cloudy) and the other for clear days (Daylight).

- FU bands on the screen before taking the picture

After we noticed that what is seen through the screen sometimes differs from the resulting photograph, this option was removed. It remains to be studied how to overlay the scale on the screen while the user looks through it at the water, so that when the user selects the colour that most closely matches the water, a picture is taken automatically.

- FU colour bands

The RGB values of the bands can be changed so that the colours can be adjusted to those seen in the field. We will study loading both the colours and the number of bands dynamically when the application starts, from a configuration file (which could be called bands.txt). This file contains the colour coordinates of the n bands: R1, G1, B1; R2, G2, B2; ...; Rn, Gn, Bn. In this way, changing the colours and the number of bands would require changing only this file. We propose a plain-text format for this configuration file so that, at this early stage, different bands and colours can be tested with a simple text editor (such as Notepad) as many times as needed.

- Number of bands

We will also consider changing the number of bands.

We will work on creating a photo library of water colours for each FU colour, to help the user compare the colour observed in the field with real photographs of the corresponding FU bar acquired under different lighting conditions. The current prototype has 21 coloured bars, but we will determine in the future whether the scale has too many colours.

There is also an option to indicate whether each band is visible or not, to give the user more options during the initial evaluation phase. This has been proposed for two reasons. First, because it would reduce the possibilities of changing the values, and we would have to coordinate technologies and servers to locate the file or web service that provides the RGB values to the application. Second, because the subject needs more detailed analysis once the first prototype is tested since, for example, different devices or types of devices may use different RGB values.
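A minimal Java sketch of how the proposed bands.txt format (R1, G1, B1; R2, G2, B2; ...; Rn, Gn, Bn) could be parsed, together with a per-band visibility flag. The class and method names are hypothetical; the sketch assumes 8-bit channel values (0-255) and keeps visibility in memory only, because the text does not specify how the visibility option would be stored. On a device the file would be read from wherever the app keeps bands.txt; here `load` simply reads a path.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;

/** Sketch of a loader for "R1, G1, B1; R2, G2, B2; ...; Rn, Gn, Bn" (hypothetical names). */
final class BandsConfig {

    private final List<int[]> colours; // each entry is {r, g, b}
    private final boolean[] visible;   // per-band visibility, all bands visible by default

    private BandsConfig(List<int[]> colours) {
        this.colours = colours;
        this.visible = new boolean[colours.size()];
        Arrays.fill(this.visible, true);
    }

    /** Reads and parses the configuration file (UTF-8 text). */
    static BandsConfig load(Path path) throws IOException {
        return parse(Files.readString(path, StandardCharsets.UTF_8));
    }

    /** Parses the file content; the number of bands n is whatever the file contains. */
    static BandsConfig parse(String text) {
        List<int[]> colours = new ArrayList<>();
        for (String band : text.split(";")) {
            String trimmed = band.trim();
            if (trimmed.isEmpty()) {
                continue; // tolerate a trailing ';' or blank lines
            }
            String[] parts = trimmed.split(",");
            if (parts.length != 3) {
                throw new IllegalArgumentException("expected R, G, B but got: " + trimmed);
            }
            int[] rgb = new int[3];
            for (int i = 0; i < 3; i++) {
                int value = Integer.parseInt(parts[i].trim()); // NumberFormatException on bad input
                if (value < 0 || value > 255) {
                    throw new IllegalArgumentException("channel out of range 0-255: " + value);
                }
                rgb[i] = value;
            }
            colours.add(rgb);
        }
        return new BandsConfig(colours);
    }

    int bandCount() {
        return colours.size();
    }

    /** Returns a copy of the {r, g, b} triple of band {@code index} (0-based). */
    int[] colour(int index) {
        return colours.get(index).clone();
    }

    void setVisible(int index, boolean isVisible) {
        visible[index] = isVisible;
    }

    /** 0-based indices of the bands currently shown to the user. */
    List<Integer> visibleBands() {
        List<Integer> result = new ArrayList<>();
        for (int i = 0; i < visible.length; i++) {
            if (visible[i]) {
                result.add(i);
            }
        }
        return Collections.unmodifiableList(result);
    }
}
```

Because the band count comes from the file itself, adding or removing a band only means editing bands.txt, which matches the intent described above.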

Description

To develop the Color Measurement App, we used the Android Developer Tools (ADT) and the Android SDK (API libraries and developer tools). We used sensors for two purposes, to obtain the best position and location of the device:

- First, the location, which is stored in the metadata to record the position from which the measurement was made.

- Second, we used the gravity sensor and the magnetic field sensor to measure the rotation of the device, in degrees, relative to a previously defined reference, and its tilt relative to the ground. With these data we can help the user position the device correctly so that measurements are as accurate as possible.
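The positioning aid can be illustrated with the vector maths that Android's `SensorManager.getRotationMatrix` and `SensorManager.getOrientation` apply to gravity and magnetic-field readings. The plain-Java sketch below reimplements that construction so it can run off-device, and adds a simple tilt angle computed from gravity alone. The class name is hypothetical, both vectors are assumed to be in device coordinates as reported by the gravity and magnetic field sensors, and on a phone one would normally call the `SensorManager` methods directly.

```java
/**
 * Sketch of the orientation maths used to guide the user (hypothetical names).
 * Device coordinates: x to the right, y towards the top of the screen, z out of the screen.
 */
final class DeviceOrientation {

    private DeviceOrientation() {}

    /**
     * Angle in degrees between the device z-axis and the gravity reading:
     * about 0 when the phone lies flat screen-up, about 90 when it is held upright.
     */
    static double tiltDegrees(double[] gravity) {
        double[] a = normalize(gravity);
        double cos = Math.max(-1.0, Math.min(1.0, a[2])); // guard the acos domain
        return Math.toDegrees(Math.acos(cos));
    }

    /**
     * Azimuth in degrees [0, 360) of the device y-axis, clockwise from magnetic north.
     * Same construction as getRotationMatrix (H = E x A, M = A x H)
     * followed by getOrientation (azimuth = atan2(H_y, M_y)).
     */
    static double azimuthDegrees(double[] gravity, double[] magnetic) {
        double[] a = normalize(gravity);
        double[] h = cross(magnetic, a); // horizontal vector pointing east
        if (Math.sqrt(dot(h, h)) < 1e-6) {
            throw new IllegalArgumentException("magnetic field parallel to gravity; azimuth undefined");
        }
        h = normalize(h);
        double[] m = cross(a, h); // horizontal vector pointing to magnetic north
        double azimuth = Math.toDegrees(Math.atan2(h[1], m[1]));
        return (azimuth + 360.0) % 360.0;
    }

    private static double dot(double[] u, double[] v) {
        return u[0] * v[0] + u[1] * v[1] + u[2] * v[2];
    }

    private static double[] cross(double[] u, double[] v) {
        return new double[] {
            u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0]
        };
    }

    private static double[] normalize(double[] v) {
        double n = Math.sqrt(dot(v, v));
        if (n == 0) {
            throw new IllegalArgumentException("zero-length vector");
        }
        return new double[] {v[0] / n, v[1] / n, v[2] / n};
    }
}
```

With these two angles, the app could compare the current pose with the desired one (for example a target tilt and a heading relative to the sun) and prompt the user until both are within a tolerance; the target values themselves would have to come from the measurement protocol.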

Fluorescence app

The overall objective is to carry out fluorescence measurements of water samples, controlled by a mobile phone app. The current working environment consists of a water sample in a cuvette, in which the fluorescence of dissolved and particulate matter is excited and recorded with internal smartphone elements, such as the integrated LED and the internal camera.

The specific objective of this App prototype is to serve as a test environment to determine which factors influence the quality of the measurement. One requirement was the ability to adjust the relevant internal smartphone parameters for the measurements, such as light intensity, illumination duration and white balance.

Specifications of the development

Since obtaining uniform data from the cameras of all smartphones on the market is impossible, we considered approaching the problem by standardizing the conditions of use for any camera. This involves studying the operation of the camera, the flash, the automatic settings, the device's white balance, and so on. As an initial observation, the amount of light (photons) that the photographed object receives is more important than the duration of the flash.

This app mainly consists of controlling the LED flash (i.e. turning it on or off) and checking the camera settings (e.g. the exposure time).

We decided to build a native application (one developed specifically for the platform), as we need access to specific device functions.

We decided not to use device-specific camera drivers: although they would let us control low-level parameters, they would become a problem in the future, because the number of devices on which the app could be used would be greatly reduced. The tests to be performed with AppLED will use different parameter values to assess their relevance and influence on the measurement results.

Description

AppLED has an initial screen (see Figure 2) that offers the following options:

- Image preview

The preview shows the image captured through the camera optics built into the smartphone.

- Duration of illumination

In the middle of the screen, a slider lets us adjust the flash exposure in milliseconds. It ranges from 50 to 1500 ms and is set simply by dragging the highlighted point with a finger.

- Filters and settings

The app displays on screen, at all times, the filters and settings with which the picture will be taken.

- Parameter specifications

To change these parameters (see Figure 2), open the WFS menu, which helps us improve or change the quality of the photo, as shown in the following screenshots.

Figure 2 Initial screen of AppLED
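The duration slider can be sketched as a linear mapping from the slider position to the 50-1500 ms range described for AppLED. This is an illustrative assumption, not the AppLED code: the class name is hypothetical, the mapping is assumed to be linear, and on Android the `progress` value would typically come from `SeekBar.OnSeekBarChangeListener.onProgressChanged`.

```java
/** Sketch: map a slider position to a flash duration in the 50-1500 ms range (hypothetical names). */
final class FlashDuration {

    static final int MIN_MS = 50;
    static final int MAX_MS = 1500;

    private FlashDuration() {}

    /** Maps progress in [0, maxProgress] linearly onto [MIN_MS, MAX_MS], clamping out-of-range input. */
    static int fromProgress(int progress, int maxProgress) {
        if (maxProgress <= 0) {
            throw new IllegalArgumentException("maxProgress must be positive");
        }
        int p = Math.max(0, Math.min(progress, maxProgress));
        return MIN_MS + Math.round((float) p * (MAX_MS - MIN_MS) / maxProgress);
    }

    /** Inverse mapping, e.g. to restore the slider position from a saved duration. */
    static int toProgress(int durationMs, int maxProgress) {
        if (maxProgress <= 0) {
            throw new IllegalArgumentException("maxProgress must be positive");
        }
        int d = Math.max(MIN_MS, Math.min(durationMs, MAX_MS));
        return Math.round((float) (d - MIN_MS) * maxProgress / (MAX_MS - MIN_MS));
    }
}
```

Clamping in both directions keeps a stored or mistyped duration from placing the slider outside its range, so the value shown on screen always matches the duration actually used.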